Whenever I think about ethics in Computer Science, or in technology in general, I am reminded of the wonderful scene in Jurassic Park where Dr. Ian Malcolm calmly dismantles what John Hammond believes is a genius project: bringing dinosaurs back from extinction. The crux of Ian's reasoning is this: "Your scientists were so preoccupied with whether or not they could, they didn't stop to think if they should."

In many ways I feel that the majority of the ethical issues we see in CS-related areas today stem from this basic premise. One of the hashtag feeds I follow closely on Twitter is #ethicalCS. The resources and thoughts shared there always make me reflect, both as an educator and as a technologist, on the ethical and moral issues surrounding computing. The topic is so vast that covering all of it in one blog post is impossible. Hence, this post is a small initial snapshot of what I have pieced together from research and initiatives in academia on pedagogy, collaboration, policy making and curriculum.

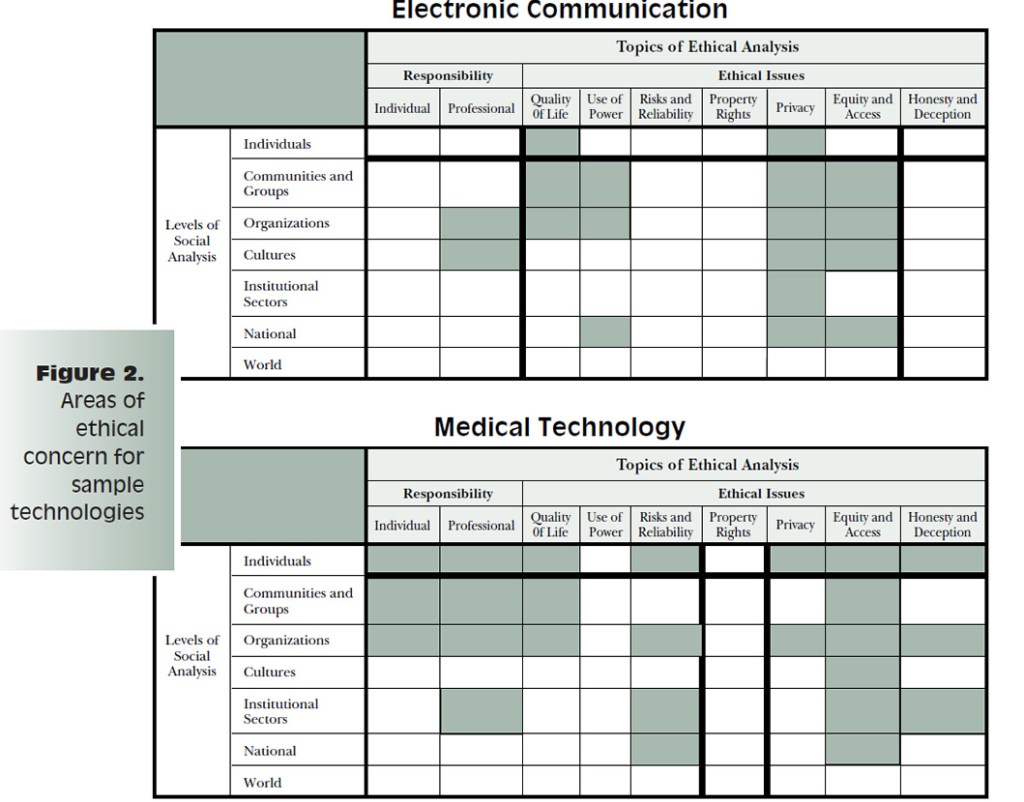

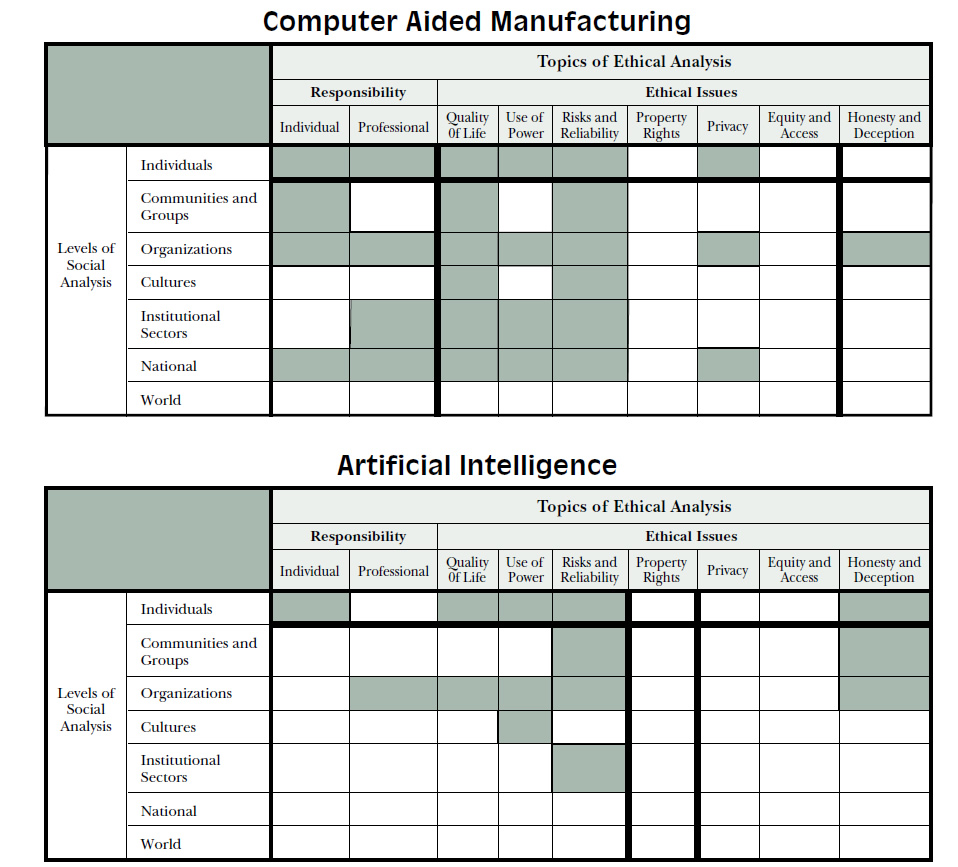

I came across a 1995 paper titled "Computing Consequences: A Framework for Teaching Ethical Computing" by Chuck Huff and C. Dianne Martin. One of its most striking features is how prophetic it was in arguing that merely increasing the volume or magnitude of technology will not answer certain key questions of the future. The section below, which examines the areas within technology where ethical concerns most need focus, is quite revealing.

From data breaches to copyright scandals, the technology world today has an increasingly pivotal role to play in restoring the trust of millions of end users. Every story of a privacy- or ethics-related issue in technology brings a teachable moment to our classrooms (all classrooms) that has the power to help create better decision makers for tomorrow. The issues we face today make this 1995 paper rather iconic, because it seems to have foreseen a world of massive amounts of data and enormous user bases. It predicts a world where collaboration in communities is a standard form of communication, and where the ethical boundaries of software design are tested in pursuit of financial gain.

But computing also presents genuine ethical dilemmas. Whose version of right and wrong should a technology rely on? Or should it be able to make its own decisions, the way AI is trying to? If so, what are the consequences? What are the right questions to ask?

Harvard's initiative to embed ethics in its computer science curriculum is a reminder that educational organisations are where the real work begins. The notion that ethics is something to be examined only after a solution has been designed and implemented is undergoing a radical shift. Harvard's co-teaching model, in which the philosophy and computer science departments work together to both develop and deliver the curriculum, is an interesting approach. Called Embedded EthiCS, the curriculum offers insights into issues of privacy, inclusion, censorship, and moral machines.

Another interesting example is schools using the science fiction genre as a way to bring ethical discussions into their computer science curriculum. A standout point for me in this piece was the following statement: "although science fiction isn't much use for knowing the future, it's underrated as a way of remaining human in the face of ceaseless change."

MIT's now well-known Moral Machine is used quite a bit in computing classrooms. Its activities force students to critically question and examine their own biases and their understanding of what ethical issues look like in a computational setting. Being forced to choose who lives and who dies, who escapes unhurt and who is critically injured, helps start conversations that go beyond the technology and into philosophy, psychology and the social sciences. In a world where technological advances constantly push the boundaries of what is considered ethical practice and what is labeled a "safety measure", such focused conversations can help students (and teachers!) construct better ethical policies around computational models.

I also found this informative video clip from MIT where a panel of faculty and researchers discuss how they teach ethics and policy within their computing disciplines.

Some highlights from the panel's discussion, which featured four speakers from Harvard, MIT, Carnegie Mellon and Cornell University/Microsoft Research Labs:

- Like anything else, addressing ethics in computing starts with leadership, both in technology and in policy management. MIT's work on Net Neutrality is an important example in this direction.

- Ethics needs to be a foundational element at the core of every system design, whether curriculum, pedagogy or product (Harvard's embedded program is one example).

- Students from different subjects should be brought together, and explicitly taught how to collaborate, on projects involving systems that interact with humans.

- Alongside pedagogy and curriculum, state-based policies and the licenses and contracts that accompany computational projects are equally important for explicitly ensuring that ethical aspects are embedded in both design and development.

Closing thoughts

I started writing this blog post in what now feels like a pre-COVID-19 era. I make it sound like a long time ago, but I mean early January 2020. So much has changed around the world in just three months. While all of us struggle to deal with this rapidly developing situation inside and outside our classrooms, the number of ethical issues confronting us continues to grow unabated. If anything, it is now even more important for us, as teachers and students, to reflect on these issues constantly. Given the wide landscape of work in this area, I intend to keep revisiting it with more insights, research and findings from experts around the world.

Interesting research projects

- Gender Shades: a student's master's thesis research project on gender and racial bias in facial recognition algorithms.

- AI Doctor: An MIT professor uses a collection of 90,000 breast X-rays to create software that predicts a patient's cancer risk.

Other recommended reading

Teaching Ethical Issues in Computer Science: What Worked and What Didn't

Brown CS makes responsible Computer Science an integral part of its undergraduate programs

Building AI Ethics education into Computer Science classes

Weaving ethics into Columbia’s Computer Science curriculum

The ethical dilemma we face on AI and autonomous tech | Christine Fox | TEDxMidAtlantic